Modelling "What the hell", my thoughts on chatGPT

Getting to know this awesome artificial intelligence

“Keep in mind, GPT-3 isn’t pulling this information from a database — it’s generating it!” Exploring GPT-3

Throughout this book, I have talked about artificial intelligence. I have mentioned work on spiking neural networks that could bring us closer to the brain, from communicating with machines in natural language to needing no explicit language at all. The core idea is: once we understand and model how the brain works biologically, we could not only replicate the brain’s capabilities, we might also communicate with it, the way we use computer languages to communicate with computers. Computer languages, such as the TypeScript we have used in this book, are ways to tell computers what to do, and even to get responses back in formats we understand, such as JSON and words.

Loosely speaking, the brain is a biological computer: it “stores” information, it processes sensory information, it returns calculations on its inputs, and more. From a general perspective, just as there is no difference between a bird and a plane, there is no difference between a computer and a brain.

Recently, we had a great breakthrough, still being digested by the AI community, but worth mentioning! It is called chatGPT; write this name down, because it is everywhere, even outside the computer science community. The core idea is precisely that: people can use AI without needing coding experience.

I am going to interview the chatbot, but first, let me leave you my thoughts on the matter. Even if a human’s view may no longer seem the better one, let me leave you a human’s thoughts and impressions.

Before we start, let me recommend a book about GPT from OpenAI, the core of chatGPT [34]: I am using insights from this book. chatGPT is a chatbot that uses GPT as its engine; a chatbot is a bot created to talk to humans. Even though chatGPT itself is not open, this engine is available through an API. You can easily add it to your app, even in Node.js.

In Brazil (I am not sure about elsewhere, since local legislation may create barriers), we have seen massive use of bots in customer interactions. At least for me, the bots are better in most cases. In one case, a piece of information that took me months to get from humans took me seconds to obtain from the bank’s bots. Note that those bots are not even close to chatGPT; they are children’s toys by comparison.

Let me give you some context, which you can explore further in the last pages of the book. The information provided here must be seen as preliminary, since the technology is still evolving.

When Google was launched, new horizons opened up, such as Google Trends, which has actually been used in data science; see the book by Seth Stephens-Davidowitz [35].

When the first neural network models were launched, expectations were high, until limitations were found. When deep learning reached its current state, the results were also tremendous. Error in image recognition dropped to about 5%, which is quite impressive. By the way, chatGPT is deep learning, as we are going to see.

My point is:

We do not yet know the impacts for sure.

For now, chatGPT is closed, and the model is far too heavy to run in your room, or even in your company. The biggest change will come when this technology becomes freely available, like deep learning with TensorFlow.js (Google), as we saw in this book. Imagine anyone building those bots. It is partially possible with the GPT Playground: you play, and when it is ready, you ask for the code, in several languages. It is an API: no need for heavy computers. Just make HTTP calls, and watch your web apps become smarter and smarter.
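To make the “just make HTTP calls” idea concrete, here is a minimal TypeScript sketch that builds such a request without sending it. The helper name `buildCompletionRequest`, the placeholder key, and the placeholder model name are hypothetical. The endpoint and the field names (`model`, `prompt`, `max_tokens`) are assumptions based on OpenAI’s text completions API, which may have changed, so check the current documentation before relying on them.

```typescript
// Shape of the request we want to send (hypothetical helper type).
interface CompletionRequest {
  url: string;
  init: {
    method: string;
    headers: Record<string, string>;
    body: string;
  };
}

// Builds, but does not send, a text-completion request.
// ASSUMPTION: endpoint and body fields follow OpenAI's completions API.
function buildCompletionRequest(
  prompt: string,
  apiKey: string,
  model: string
): CompletionRequest {
  return {
    url: "https://api.openai.com/v1/completions",
    init: {
      method: "POST",
      headers: {
        "Content-Type": "application/json",
        Authorization: "Bearer " + apiKey,
      },
      body: JSON.stringify({ model: model, prompt: prompt, max_tokens: 64 }),
    },
  };
}

// Placeholders only: replace with your own key and a model name from the docs.
const request = buildCompletionRequest(
  "Say hello to my readers.",
  "YOUR_API_KEY",
  "MODEL_NAME"
);

// To actually send it (Node.js 18+ or a browser), something like:
//   const response = await fetch(request.url, request.init);
//   const data = await response.json();
```

Keeping the request builder separate from the network call makes it easy to inspect what will be sent before spending any API credits.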

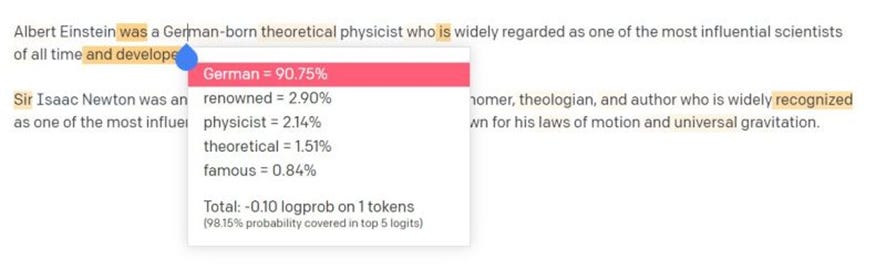

Below is a sketch of “how chatGPT thinks”:

It considers the probability that two words will appear together in the same text.

Neuroscience has shown that we do something similar. When you read, talk, and even listen, you consider the probability of words and context. When you hear something out of context, you give that “wake-up look”: what the ****??
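The idea of word probabilities, and of the surprise we feel when a word does not fit, can be illustrated with a toy example. This is only a sketch: it counts pairs of neighbouring words in a tiny made-up corpus, whereas GPT uses a deep neural network trained on enormous amounts of text. All names and sentences below are invented for illustration.

```typescript
// Toy bigram model: how often does one word follow another?
interface BigramModel {
  pairCounts: Record<string, number>;  // "previous next" -> count
  firstCounts: Record<string, number>; // "previous" -> times it starts a pair
}

// Lowercase the text and split it into simple word tokens.
function tokenize(text: string): string[] {
  return text.toLowerCase().match(/[a-z']+/g) || [];
}

// Count every pair of neighbouring words in the corpus.
function train(corpus: string[]): BigramModel {
  const model: BigramModel = {
    pairCounts: Object.create(null),
    firstCounts: Object.create(null),
  };
  for (let s = 0; s < corpus.length; s++) {
    const words = tokenize(corpus[s]);
    for (let i = 0; i + 1 < words.length; i++) {
      const pair = words[i] + " " + words[i + 1];
      model.pairCounts[pair] = (model.pairCounts[pair] || 0) + 1;
      model.firstCounts[words[i]] = (model.firstCounts[words[i]] || 0) + 1;
    }
  }
  return model;
}

// P(next | previous), estimated from raw counts (0 if never seen).
function probability(model: BigramModel, previous: string, next: string): number {
  const total = model.firstCounts[previous] || 0;
  if (total === 0) return 0;
  return (model.pairCounts[previous + " " + next] || 0) / total;
}

// Surprisal in bits: the less likely the word, the bigger the surprise.
function surprisal(p: number): number {
  return p === 0 ? Infinity : -Math.log(p) / Math.LN2;
}

const corpus = [
  "the cat drinks milk",
  "the cat drinks water",
  "the dog drinks water",
];
const model = train(corpus);
const pWater = probability(model, "drinks", "water"); // seen 2 times out of 3
const pMilk = probability(model, "drinks", "milk");   // seen 1 time out of 3
const pSand = probability(model, "drinks", "sand");   // never seen
console.log(pWater, pMilk, pSand, surprisal(pSand));
```

In this toy corpus, “drinks sand” gets probability zero and therefore infinite surprisal: the model’s version of the “what the ****??” reaction.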

Maybe, even if that was not the main goal of chatGPT:

It may have somehow modeled “context knowledge”, which we use all the time. And that was a big deficiency of classical models.

As we can see from the excerpt from the official documentation below, the nice effect we see, in which it mimics humans, was “an accident”. They are not modeling humanity as we might expect. As I started to study it, I myself got somewhat disappointed. Of course, “ignorance is bliss”! I had to open the magic box and see what is inside!

“These models were trained on vast amounts of data from the internet written by humans, including conversations, so the responses it provides may sound human-like. It is important to keep in mind that this is a direct result of the system’s design (i.e. maximizing the similarity between outputs and the dataset the models were trained on) and that such outputs may be inaccurate, untruthful, and otherwise misleading at times.” Why does the AI seem so real and lifelike?

By no means do I want to diminish the developers’ work, but this model is not alone in the breakthrough. It drew so much attention, I believe, because it touches on what humans are so conservative about: their humanity! Models can now create audiobooks with human voices, they can create images from text, they can fake videos, and they can recognize people in videos and photos.

==

Part of the book: “Computational Thinking”